To use DataGrip, you will have to buy it from JetBrains. From this window, select Do not import settings and then click on OK.

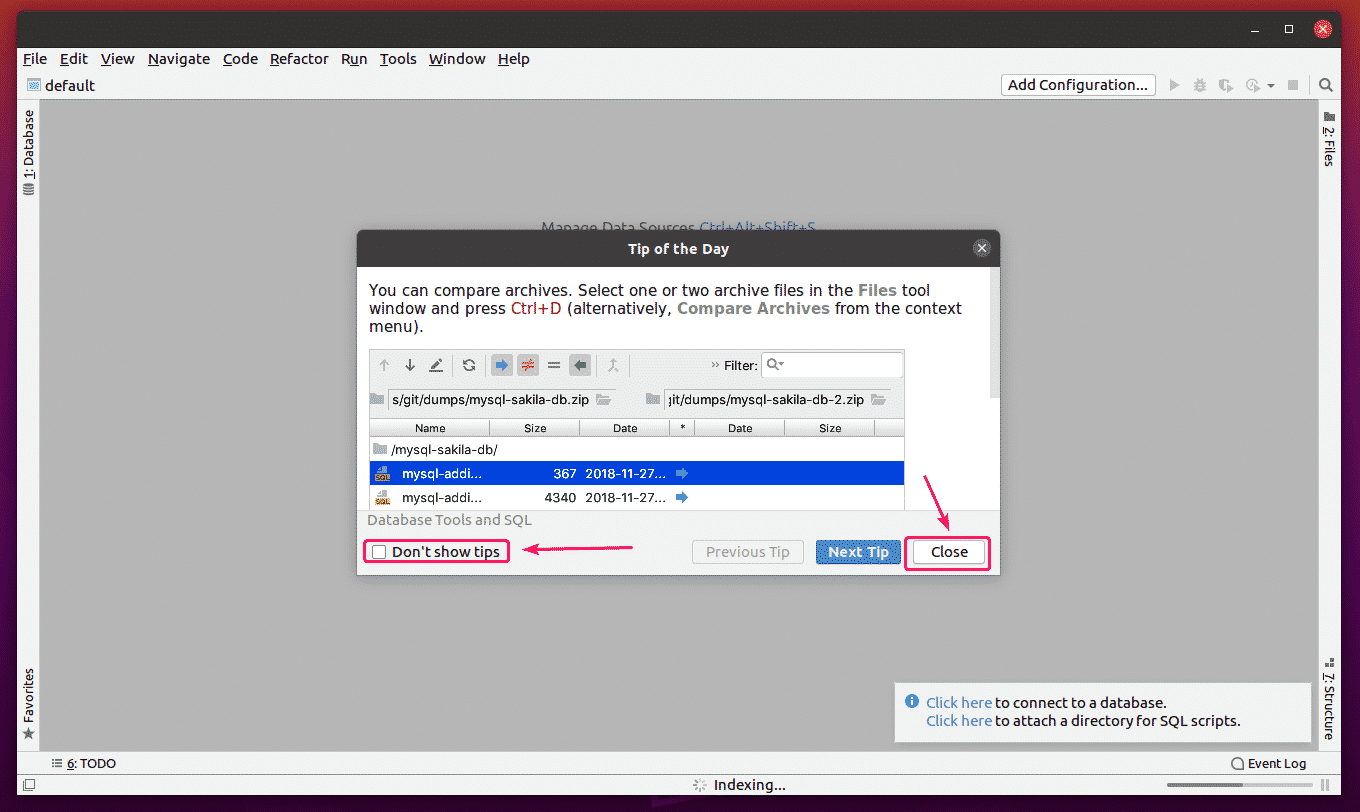

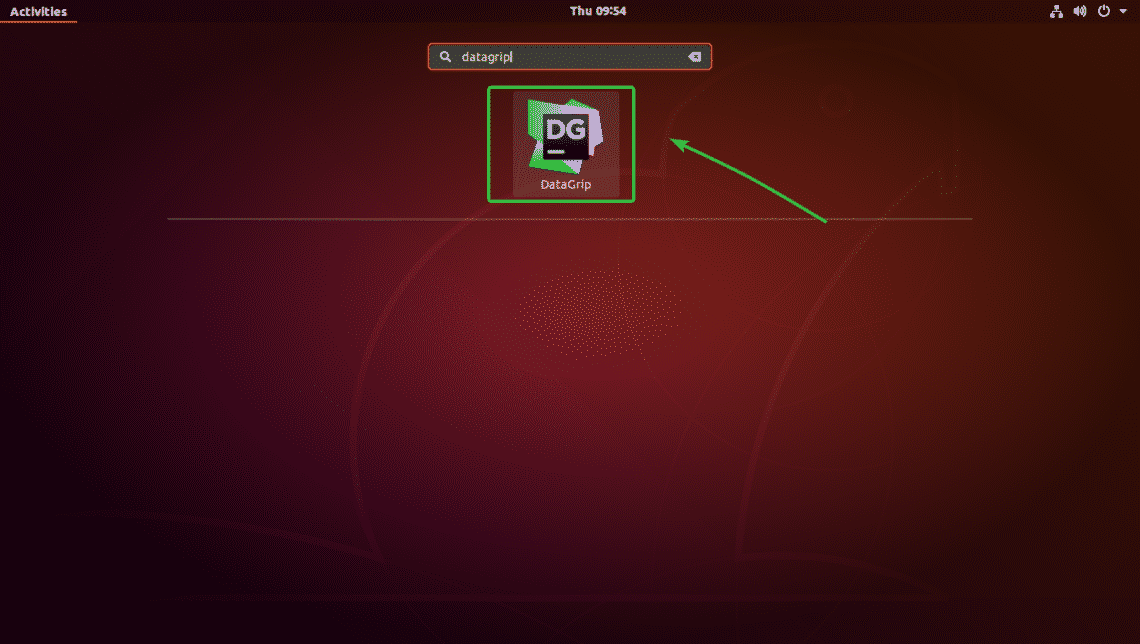

Just click on it.Īs you’re running DataGrip for the first time, you will have to do some initial configuration. Search for datagrip in the Application Menu and you should see the DataGrip icon. Now, you can start DataGrip from the Application Menu of Ubuntu. Feel free to post about any issues you face while setting the driver up in the comments section here or in the Github issues of the project.As you can see, DataGrip is being installed. All these in the comfort of a familiar tool that can serve as a client for many different data source types, Datagrip. In this tutorial we went through a simple workaround that enables data consumers of an Apache Druid cluster, execute queries using Druid’s SQL query language. The absence of auto-limit option could be disastrous leading to querying heavy loads of data and even render Historical node unavailable.Currently there is an open issue on the ability to add custom dialects in DataGrip. The main drawback of this workaround is the fact that by using Generic SQL dialect autocomplete is not available and keyword highlighting is limited to classic functions such as count.I haven’t yet tried to connect to a Broker node using TLS but I think it won’t be a problem as it is possible while using the Avatica driver with Tableau. Go to tab Options and there in the Other section select Generic SQL from the drop down menu.Add a URL template, name it default and set value to:.Select .remote.Driver in the Class drop down menu.Go to Driver Files hit + button and upload all the jar files which can be found in the jars folder of my Github repo.In the new window change Name field of the driver to Avatica Apache Druid (or anything else you want to recognize it).Navigate to File > New > Driver, to open Data Sources and Drivers window.

It was the best solution as it was easy to communicate with other team members and didn’t make us use a new tool and spend even more time to get accustomed with it.Įnough with the back story let’s open DataGrip to create a Driver and a Data source in order to query Druid. So I looked into the custom Drivers that DataGrip offers and by adding Avatica connector jar along with some others I got a working driver. I use regularly DataGrip in order to query different database systems as it has support for a vast amount of them. My team had already used Apache Calcite Avatica’s JDBC driver to connect Tableau to Apache Druid with success. At first I thought that using Apache Superset’s SQL lab which is backed by pydruid Python library could do the job for me, but it was clearly an overkill as I didn’t intent to use any other of Superset’s features. While searching for a solution in the web I couldn’t get to find a convenient one. While moving to production would make things even worse in order to manage the users and finally take the non admins out of the Druid Router’s UI. Querying Druid using a third party client application became a necessity as more and more of my colleagues needed to get access to our Druid data sources during the development stages. In this story I want to share a way to create a DataGrip Driver that is able to connect to a Druid cluster and perform SQL queries. The experience of this process will be covered hopefully in future stories. In the last few months I have been working on the deployment of an Apache Druid cluster for the first time in a production environment. Τhis article was first published in Libra AI Technologies blog.
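Because the Generic SQL dialect offers no auto-limit option, one possible mitigation is to cap result sizes yourself before a query reaches the Broker. The sketch below is a hypothetical client-side helper, not part of DataGrip, the Avatica driver or the setup described above; the function name `ensure_limit`, the default of 1000 rows and the `wikipedia` data source name are illustrative assumptions. It appends a `LIMIT` clause only when the statement does not already end with one, and it inspects the end of the string rather than parsing SQL, so it will not catch every case.

```python
import re

# Matches a LIMIT clause (optionally followed by OFFSET) at the very end of a statement.
_TRAILING_LIMIT = re.compile(r"\blimit\s+\d+(?:\s+offset\s+\d+)?$", re.IGNORECASE)


def ensure_limit(sql: str, max_rows: int = 1000) -> str:
    """Append LIMIT max_rows to sql unless it already ends with a LIMIT clause.

    Hypothetical client-side guard: it only looks at the end of the string and
    does not parse SQL (for example, trailing comments are not handled).
    """
    if max_rows <= 0:
        raise ValueError("max_rows must be a positive integer")
    statement = sql.strip()
    # Drop a single trailing semicolon so LIMIT is not appended after it.
    if statement.endswith(";"):
        statement = statement[:-1].rstrip()
    if _TRAILING_LIMIT.search(statement):
        return statement  # the caller already chose a limit; keep it
    return f"{statement} LIMIT {max_rows}"


if __name__ == "__main__":
    # "wikipedia" is an illustrative data source name.
    print(ensure_limit("SELECT * FROM wikipedia"))
```

For example, `ensure_limit("SELECT * FROM wikipedia")` returns `SELECT * FROM wikipedia LIMIT 1000`, while a query that already ends in `LIMIT 10` is left as it is. Checking only the trailing clause keeps the helper simple: a `LIMIT` inside a subquery still gets an outer `LIMIT` appended, which is redundant but harmless.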
